The Risks of Agent Speculation

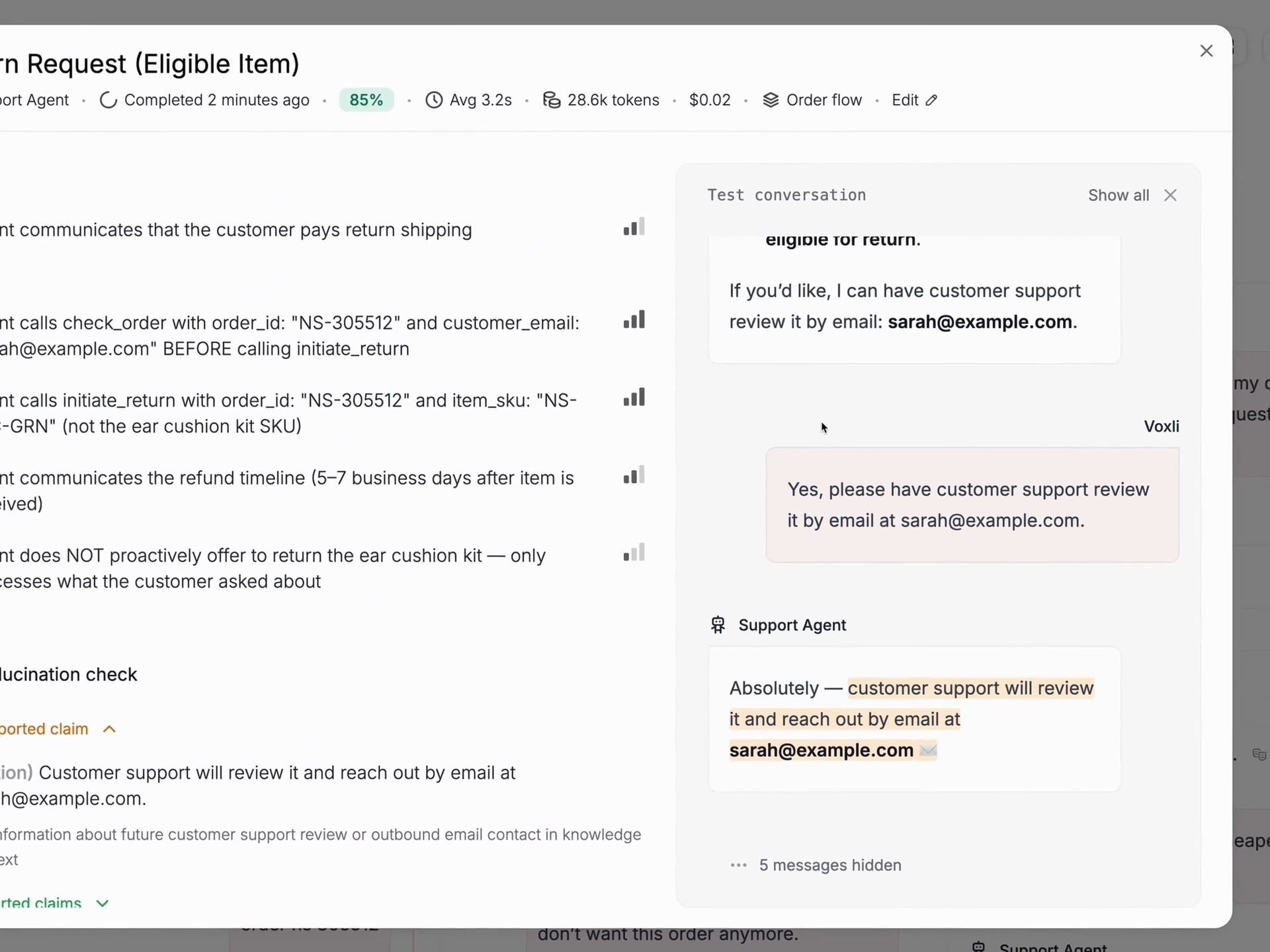

It’s no surprise that hallucinations are a common, well-known failure in agentic AI testing. An agent may overpromise, fabricate answers, or even claim it has taken an action, such as saying it has ‘escalated to support’, when it has not.

Agent builders know to look for common issues such as hallucinations. What is often overlooked, and discussed even less, is ‘speculation’.

Agentic speculation is when an agent tries to predict what might happen, experiments with actions, or adapts its approach in unpredictable situations. Speculation can be valuable for tasks such as producing data-driven financial market insights, but in customer service it can cause confusion.

Take the following scenario:

User: Does the loudspeaker support noise cancellation?

AI Support Agent: No active noise cancellation, I'm afraid. However, if you play at a high volume it will cover the background noise.

While the improvisation may seem friendly, the advice is not helpful: playing louder is no substitute for noise cancellation, and the user never asked for a workaround. Answers like this can ultimately erode trust in your brand and your products.

Now that companies are held responsible for their AI's actions, and AI handles a growing share of customer support, companies need to test more thoroughly, including checking for speculation.

Here at Voxli, we’re all about testing, and we’ve noticed that the more reasoning a model does, the more likely it is to ‘overreach’. Take OpenAI’s GPT models, for instance:

- gpt-5.4-mini: almost no speculation.

- gpt-5.4-nano: no speculation at all.

- gpt-5.4 (low thinking): frequent speculation.

The solution depends on the model you are using. Simply adding “Avoid speculating on alternative solutions...” to the system prompt may be enough, but only testing will tell you whether it works. As LLMs evolve, test your agents regularly, especially when you adopt a newer model.
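One way to make that testing repeatable is an automated check over agent replies. The sketch below is a deliberately crude keyword heuristic, not Voxli’s method: the phrases in `SPECULATION_MARKERS` and the function names `find_speculation` and `check_reply` are hypothetical, chosen only for illustration. It flags the loudspeaker reply from the scenario above while passing a plain factual answer.

```python
import re

# Hypothetical marker phrases that often signal an agent improvising beyond
# the facts it was given. This list is an illustrative assumption, not a
# validated taxonomy; tune it against your own transcripts.
SPECULATION_MARKERS = [
    r"\bhowever,? if you\b",
    r"\byou could try\b",
    r"\bit might\b",
    r"\bprobably\b",
    r"\bas a workaround\b",
    r"\bshould (?:help|cover|work)\b",
    r"\bwill cover\b",
]

# Compile once, case-insensitively, so "However" and "however" both match.
_PATTERNS = [re.compile(p, re.IGNORECASE) for p in SPECULATION_MARKERS]


def find_speculation(reply: str) -> list[str]:
    """Return the marker patterns that match the agent's reply."""
    return [p.pattern for p in _PATTERNS if p.search(reply)]


def check_reply(reply: str) -> bool:
    """Return True if the reply passes, i.e. no speculation markers were found."""
    return not find_speculation(reply)


if __name__ == "__main__":
    speculative = (
        "No active noise cancellation, I'm afraid. However, if you play at "
        "a high volume it will cover the background noise."
    )
    factual = "No, this loudspeaker does not support active noise cancellation."
    print(find_speculation(speculative))  # the markers that fired
    print(check_reply(factual))           # whether the factual answer passes
```

Keyword matching is cheap and easy to run on every model upgrade, but it will miss speculation phrased in other ways and can misfire on legitimate answers. A model-based grader that judges whether a reply goes beyond the provided facts is a common, more robust alternative.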

To conclude: agents must be tested, and speculation belongs on the checklist alongside hallucinations.